Prerequisites

- The latest version of rLLM installed (see this guide)

- Basic familiarity with Python asyncio programming

- A Tinker API key (we use Tinker as the backend for this tutorial), with export TINKER_API_KEY=<your_api_key> set in your environment

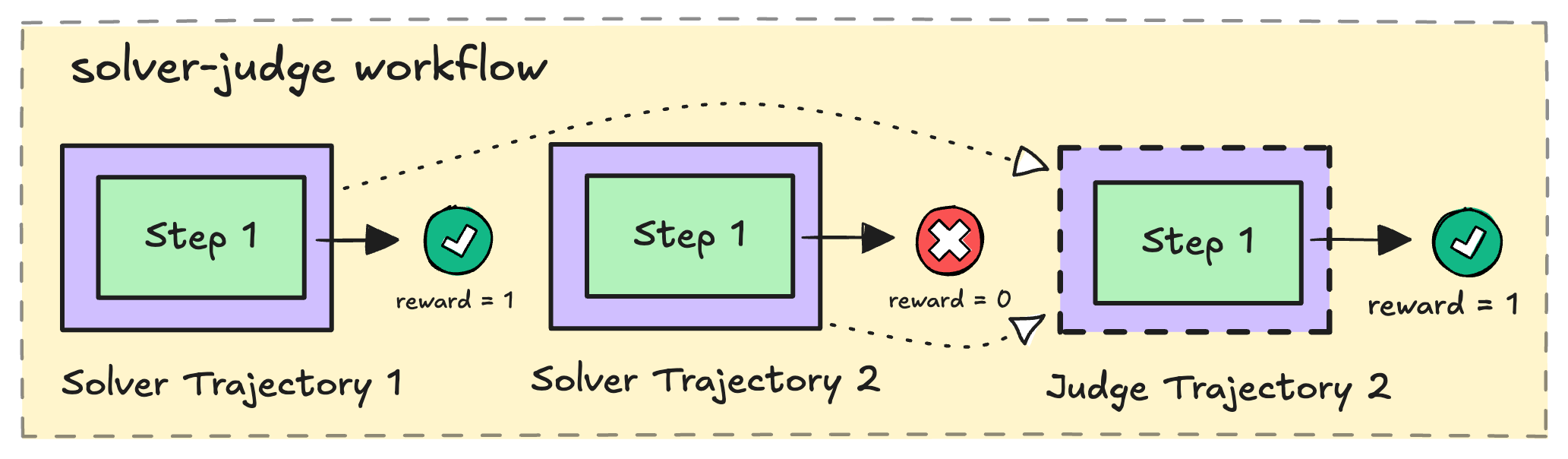

How the solver-judge workflow works

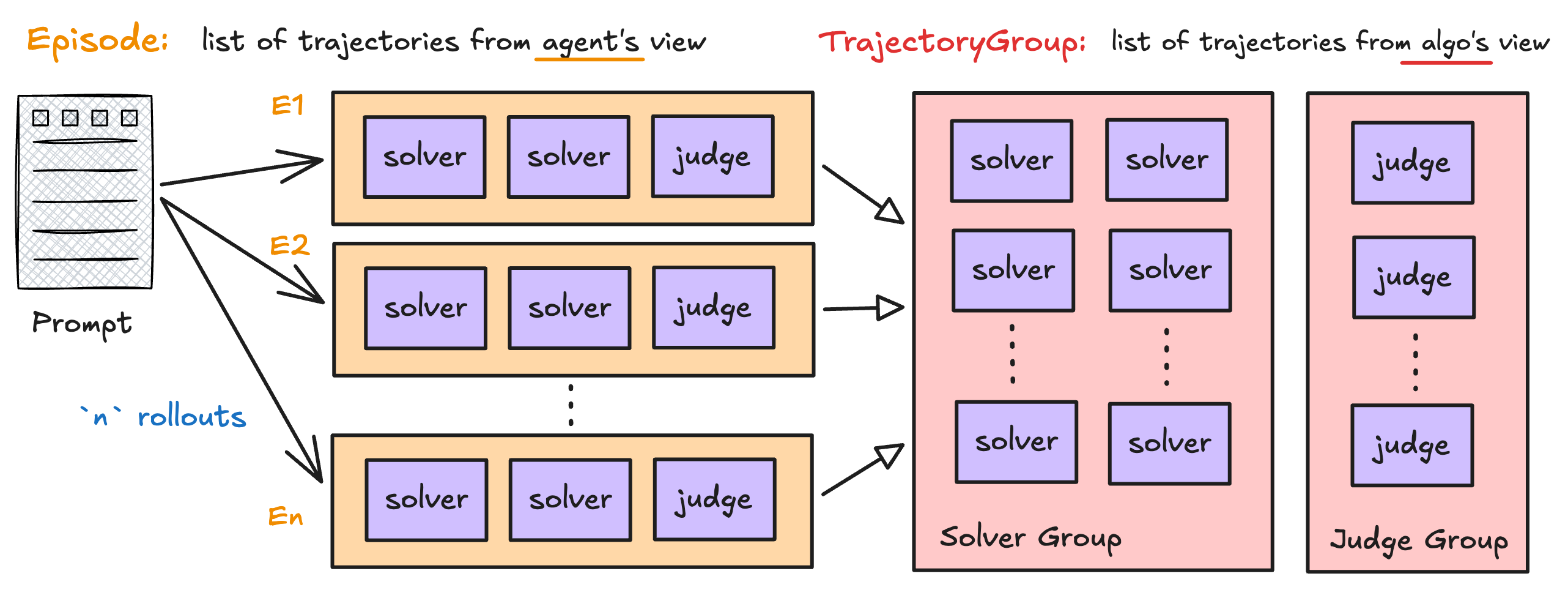

Here’s the high-level flow for a single task/prompt:

- Solve — N solver agents each receive the problem and generate a candidate solution in parallel. Below we take N=2 for simplicity.

- Judge — A judge agent reviews all candidate solutions and selects the best one.

- Reward — Each solver receives a reward based on whether its solution is correct. The judge receives a reward based on whether it selected a correct answer.

- Return — The workflow packages everything into an Episode that the trainer uses to update the policy.

During training, the workflow runs K rollouts per task, producing K x N solver trajectories and K judge trajectories, which gives the RL algorithm plenty of signal to learn from.
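To make the counts concrete, here is a small arithmetic sketch. The value K=8 is an illustrative assumption, not a default taken from rLLM; N=2 matches the setting used in this tutorial.

```python
# Illustrative values only: K rollouts per task, N solvers per rollout.
K = 8  # assumed number of rollouts per task
N = 2  # solvers per rollout, matching the N=2 used in this tutorial

solver_trajectories = K * N  # each rollout contributes N solver trajectories
judge_trajectories = K       # each rollout contributes exactly one judge trajectory

print(solver_trajectories, judge_trajectories)
```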

A quick look at rLLM’s data model

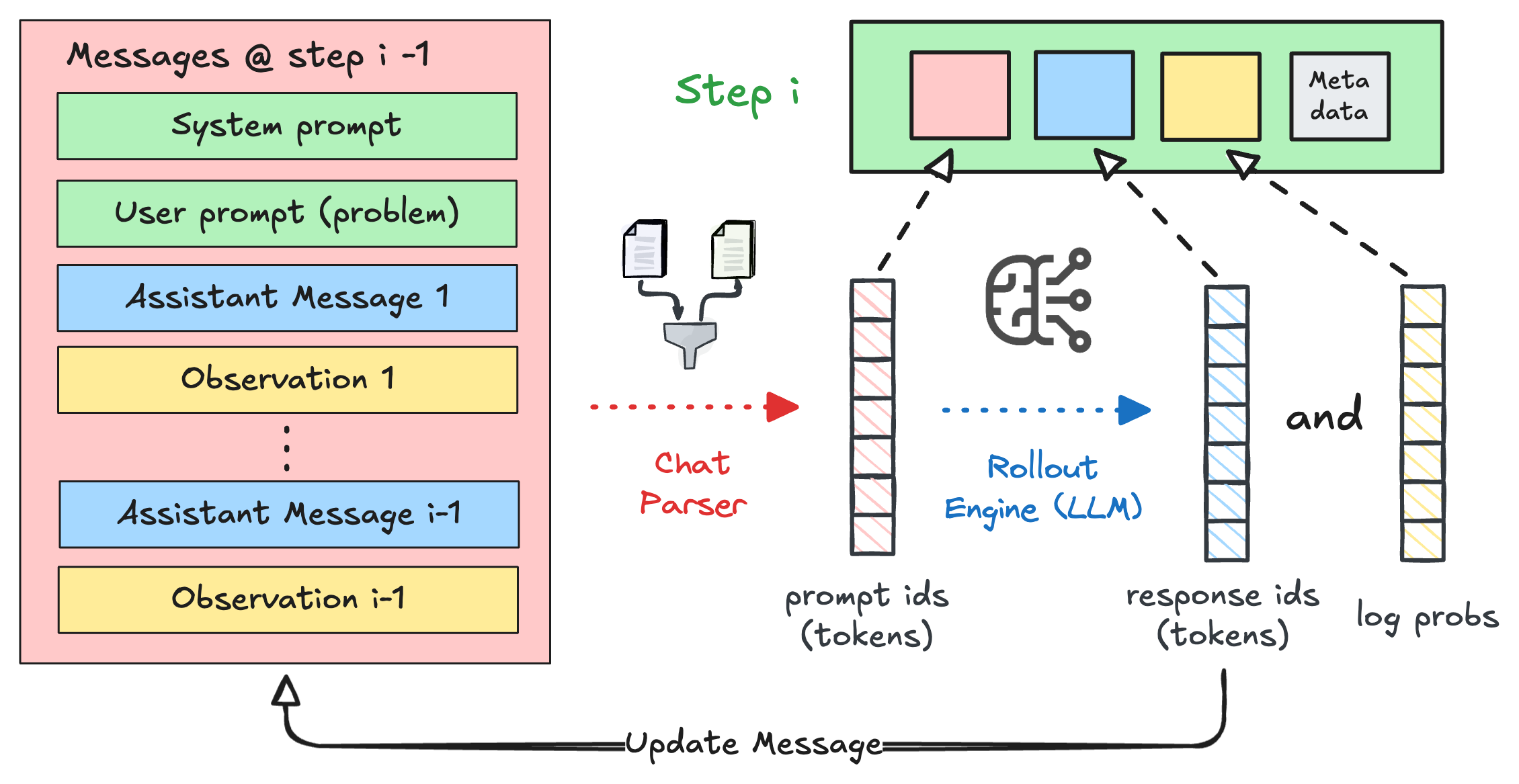

Before we start coding, let’s meet the three data structures you’ll be constructing in this tutorial. Think of them as nested containers — each one wraps the level below it.

Step — one model interaction

A Step is the atomic unit: one call to the LLM. It captures the input tokens, the generated output tokens, and the log-probabilities needed for training. At runtime, it also carries higher-level context like chat messages, the model’s reasoning, and the parsed action.

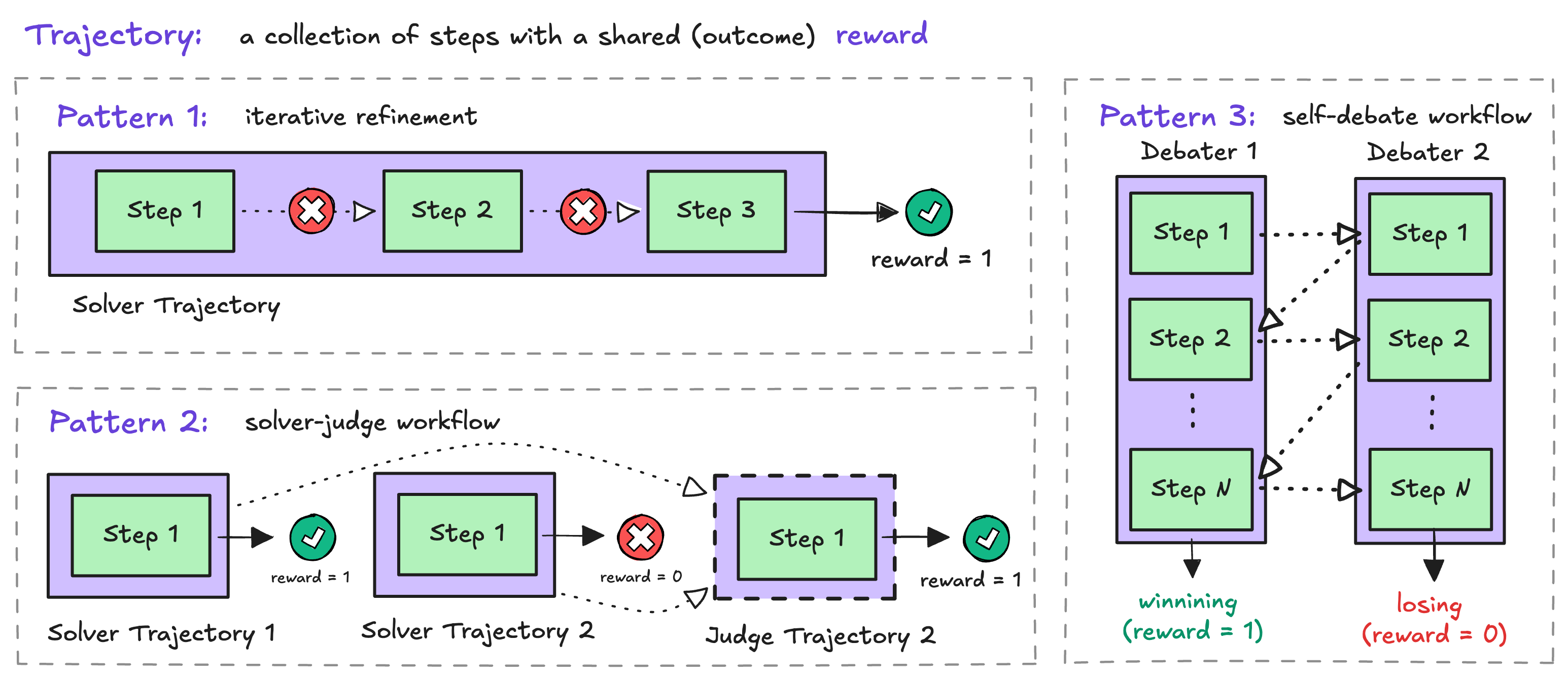

Trajectory — a role’s journey through the workflow

A Trajectory is an ordered list of Steps from a single role — for example, one solver’s attempt or the judge’s evaluation. Each trajectory has a name (like "solver" or "judge") that tells the trainer which trajectories to group together, and a shared outcome reward.

Episode — the full picture from one rollout

An Episode is what your Workflow.run() method returns. It bundles all the trajectories from a single rollout execution, along with metadata like is_correct and custom metrics.
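The nesting described above can be pictured with a few plain dataclasses. These are simplified stand-ins written for illustration, not rLLM's actual class definitions; the field names follow the ones mentioned in this tutorial (chat_completions, thought, action, is_correct, metrics), while their types and defaults are assumptions.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class Step:
    """One LLM call (simplified stand-in, not rLLM's real Step)."""
    chat_completions: list[dict[str, str]] = field(default_factory=list)
    thought: str = ""
    action: Any = None
    reward: float = 0.0


@dataclass
class Trajectory:
    """Ordered steps from a single role, identified by name."""
    name: str
    steps: list[Step] = field(default_factory=list)
    reward: float = 0.0


@dataclass
class Episode:
    """All trajectories from one rollout, plus metadata."""
    trajectories: list[Trajectory] = field(default_factory=list)
    is_correct: bool = False
    metrics: dict[str, float] = field(default_factory=dict)


# One solver and one judge trajectory bundled into a single episode.
solver = Trajectory(name="solver", steps=[Step(thought="3 * 4 = 12", action="12")], reward=1.0)
judge = Trajectory(name="judge", steps=[Step(action="12")], reward=1.0)
episode = Episode(trajectories=[solver, judge], is_correct=True, metrics={"solver_acc": 1.0})

names = [t.name for t in episode.trajectories]
print(names)
```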

We’ll see each of these structures come to life as we build the workflow below.

Building the workflow

Define the Solver

The Solver takes a RolloutEngine and uses it to generate solutions. Each call to generate_solution makes one LLM call and wraps the result in a Trajectory.

A few things to notice:

- The trajectory is named "solver" — this name is how rLLM groups trajectories during training.

- The Step captures the full chat history (chat_completions), the model’s reasoning (thought), the parsed answer (action), and the raw model output for token-level training data.

- generate_solutions launches N solvers concurrently with asyncio.gather, so they run in parallel.
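The concurrency pattern can be sketched without rLLM at all. In the snippet below, fake_llm_call is a hypothetical stand-in for a RolloutEngine request, and trajectories are represented as plain dicts; only the use of asyncio.gather mirrors what the tutorial describes.

```python
import asyncio


async def fake_llm_call(problem: str, seed: int) -> str:
    """Hypothetical stand-in for a RolloutEngine call; returns a canned answer."""
    await asyncio.sleep(0.01)  # simulate model latency
    return f"candidate {seed} for: {problem}"


async def generate_solution(problem: str, seed: int) -> dict:
    """One LLM call wrapped in a trajectory-like dict named "solver"."""
    response = await fake_llm_call(problem, seed)
    return {"name": "solver", "steps": [{"action": response}]}


async def generate_solutions(problem: str, n_solutions: int = 2) -> list[dict]:
    """Launch n_solutions solver calls concurrently; results keep input order."""
    tasks = [generate_solution(problem, i) for i in range(n_solutions)]
    return await asyncio.gather(*tasks)


trajectories = asyncio.run(generate_solutions("reach 24 from 3, 8", n_solutions=2))
print([t["steps"][0]["action"] for t in trajectories])
```

Because asyncio.gather returns results in the order the awaitables were passed, the index of each candidate stays stable, which is convenient when a judge later refers to solutions by index.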

Define the Judge

The Judge receives the problem and all candidate solutions, then selects the best one.

Same pattern as the solver — one LLM call, one Step, one Trajectory — but named "judge" instead. The judge’s action is the selected solution’s content (resolved from the index the model outputs), which makes it easy to evaluate with the same reward function used for solvers.

Compose the workflow

Now we wire the solver and judge together in a Workflow subclass. The run() method is where the magic happens.

Let’s walk through run():

- self.reset(task, uid) — Clears state from the previous task so this workflow instance can be reused.

- Generate solutions — The solver runs n_solutions LLM calls in parallel and returns a list of Trajectory objects.

- Assign solver rewards — Each solver trajectory gets a reward based on whether its parsed answer is correct. This is the per-step reward that drives solver training.

- Judge selects — The judge sees all candidate solutions and picks one. Its reward depends on whether the selected solution is correct.

- Compute metrics — solver_acc (fraction of correct solvers) and judge_acc (1 if the judge picked correctly, 0 otherwise) are logged during training.

- Return the Episode — All trajectories are bundled together. rLLM takes it from here.
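The reward and metric steps can be sketched in isolation. The snippet below assumes a hypothetical exact-match reward_fn and represents solver answers as plain strings; the real workflow would use the task's own reward function and Trajectory objects.

```python
def reward_fn(answer: str, ground_truth: str) -> float:
    """Hypothetical exact-match reward: 1.0 if correct, else 0.0."""
    return 1.0 if answer.strip() == ground_truth.strip() else 0.0


def score_rollout(solver_answers: list[str], judge_index: int, ground_truth: str) -> dict:
    """Assign solver and judge rewards, then compute the two metrics."""
    solver_rewards = [reward_fn(a, ground_truth) for a in solver_answers]
    # The judge's action is the content of the solution it selected,
    # so the same reward function applies to it.
    judge_action = solver_answers[judge_index]
    judge_reward = reward_fn(judge_action, ground_truth)
    return {
        "solver_rewards": solver_rewards,
        "judge_reward": judge_reward,
        "solver_acc": sum(solver_rewards) / len(solver_rewards),
        "judge_acc": judge_reward,
    }


# Two solvers, one correct; the judge picks index 0 (the correct one).
result = score_rollout(["24", "25"], judge_index=0, ground_truth="24")
print(result)
```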

The Episode you return is the complete training signal for this task. rLLM handles the rest — grouping trajectories by name, computing advantages, and updating the policy.

Training the workflow

With the workflow defined, you need two more pieces: a Python training script that wires everything together, and a shell script that launches training with the right configuration.

Writing the training script

The simplest way to train a workflow is through AgentTrainer, which wraps the UnifiedTrainer and handles backend setup for you.

- DatasetRegistry.load_dataset — Loads a built-in dataset. The countdown task asks the model to combine numbers with arithmetic to reach a target — a good testbed for reasoning.

- workflow_class + workflow_args — Tells the trainer which workflow to run and how to configure it. These args are passed to every workflow instance.

- backend="tinker" — Selects the Tinker backend for async training. Other options include "verl".

- traj_group_adv_estimator_map — This is the key to multi-role training. It assigns a different advantage estimator to each trajectory group by name.

Full training script on GitHub

Complete training script with AgentTrainer and per-role estimators

Writing the launch script

The training script uses Hydra for configuration, so you pass config overrides on the command line. A shell script keeps this manageable.

| Group | Parameters | What they control |
|---|---|---|
| Data | train_batch_size, max_prompt_length, max_response_length | How many tasks per batch and token length limits |
| Model | model.path, actor.optim.lr | Which model to train and the learning rate |
| Rollout | rollout.n, rollout.temperature | Number of rollouts per task (K) and sampling temperature |
| Algorithm | adv_estimator | Default advantage estimator (overridden per-role by traj_group_adv_estimator_map) |
| Workflow | workflow.use_workflow | Must be True to enable the workflow engine |
| Training | total_epochs, test_freq, save_freq | Training duration and checkpoint/eval frequency |

The environment variables configure vLLM (the inference engine used during rollouts). CUDA_VISIBLE_DEVICES controls which GPUs are used. Adjust these based on your hardware setup.

What happens during training

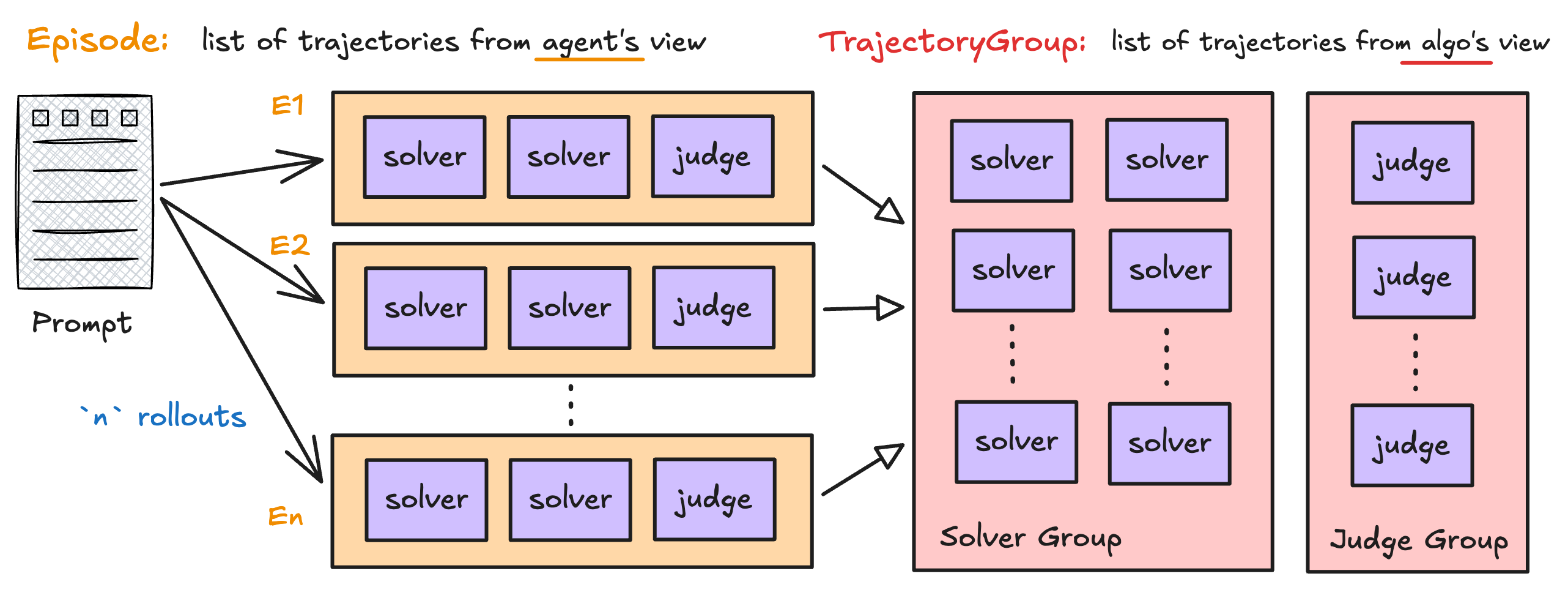

With your workflow and training script in place, here’s what the training loop does under the hood — tying back to the data model we introduced earlier. For each batch of tasks:

- Generate episodes — The engine runs your SolverJudgeWorkflow.run() K times per task (controlled by rollout.n). Each run produces one Episode containing N solver trajectories + 1 judge trajectory.

- Group trajectories — Episodes are regrouped into TrajectoryGroups by name. All solver trajectories for the same task end up in one group; all judge trajectories in another. This is the right side of the episode diagram.

- Compute advantages — Within each group, the advantage estimator compares trajectories. Solvers are compared via GRPO (relative ranking within the group), while judge trajectories use REINFORCE.

- Update the policy — The shared model is updated to increase the probability of high-advantage trajectories and decrease low-advantage ones.

- Validate — Periodically, the engine runs validation rollouts (without training) and reports solver_acc and judge_acc metrics.
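The grouping and advantage steps can be illustrated with a small standalone sketch. It assumes the commonly used form of GRPO (rewards centred on the group mean and divided by the group standard deviation) and a baseline-free REINFORCE that uses the raw reward as the advantage; rLLM's estimators may differ in detail. The ESTIMATORS dict is a hypothetical analogue of a per-role estimator map, not the library's actual configuration object.

```python
from collections import defaultdict
from statistics import mean, pstdev


def group_by_name(episodes: list[list[tuple[str, float]]]) -> dict[str, list[float]]:
    """Regroup (name, reward) pairs from all episodes of one task by trajectory name."""
    groups = defaultdict(list)
    for episode in episodes:
        for name, reward in episode:
            groups[name].append(reward)
    return dict(groups)


def grpo_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """Assumed GRPO form: (reward - group mean) / (group std + eps)."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def reinforce_advantages(rewards: list[float]) -> list[float]:
    """Assumed baseline-free REINFORCE: the advantage is the raw reward."""
    return list(rewards)


# Hypothetical per-role map: one estimator per trajectory group name.
ESTIMATORS = {"solver": grpo_advantages, "judge": reinforce_advantages}

# K=2 rollouts of one task, each with N=2 solvers and one judge.
episodes = [
    [("solver", 1.0), ("solver", 0.0), ("judge", 1.0)],
    [("solver", 0.0), ("solver", 0.0), ("judge", 0.0)],
]
groups = group_by_name(episodes)
advantages = {name: ESTIMATORS[name](rewards) for name, rewards in groups.items()}
print(advantages)
```

In this toy batch, the single correct solver ends up with a positive advantage and the three incorrect ones with negative advantages, while the judge advantages simply equal the judge rewards.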

Next steps

Unified trainer

Deep dive into the training loop architecture and 8-stage batch pipeline

Configuration

Full reference for all training, rollout, and algorithm config options

Advantage estimator

Customize how advantages are computed per role

Backend protocol

Implement a custom backend for your infrastructure