Repository: rllm-org/rllm-ui

Getting started

There are two ways to access rLLM UI:

- Cloud: Use our hosted service at ui.rllm-project.com (see below).
- Self-hosted: Run locally from the repository (see below).

Cloud setup

Sign up

Sign up at ui.rllm-project.com.

| Variable | Required | Scope | Default | Description |
|---|---|---|---|---|
| RLLM_API_KEY | Yes | Training script env | (none) | API key for authenticating training data ingestion (shown once at registration) |
| RLLM_UI_URL | No | Training script env | https://ui.rllm-project.com | Defaults to cloud URL when RLLM_API_KEY is set |

The observability AI agent can be enabled by adding your ANTHROPIC_API_KEY in the Settings page in the UI; no extra configuration is needed.

Self-hosted setup

Once the frontend is running, open http://localhost:5173 (or the port shown in the Vite output).

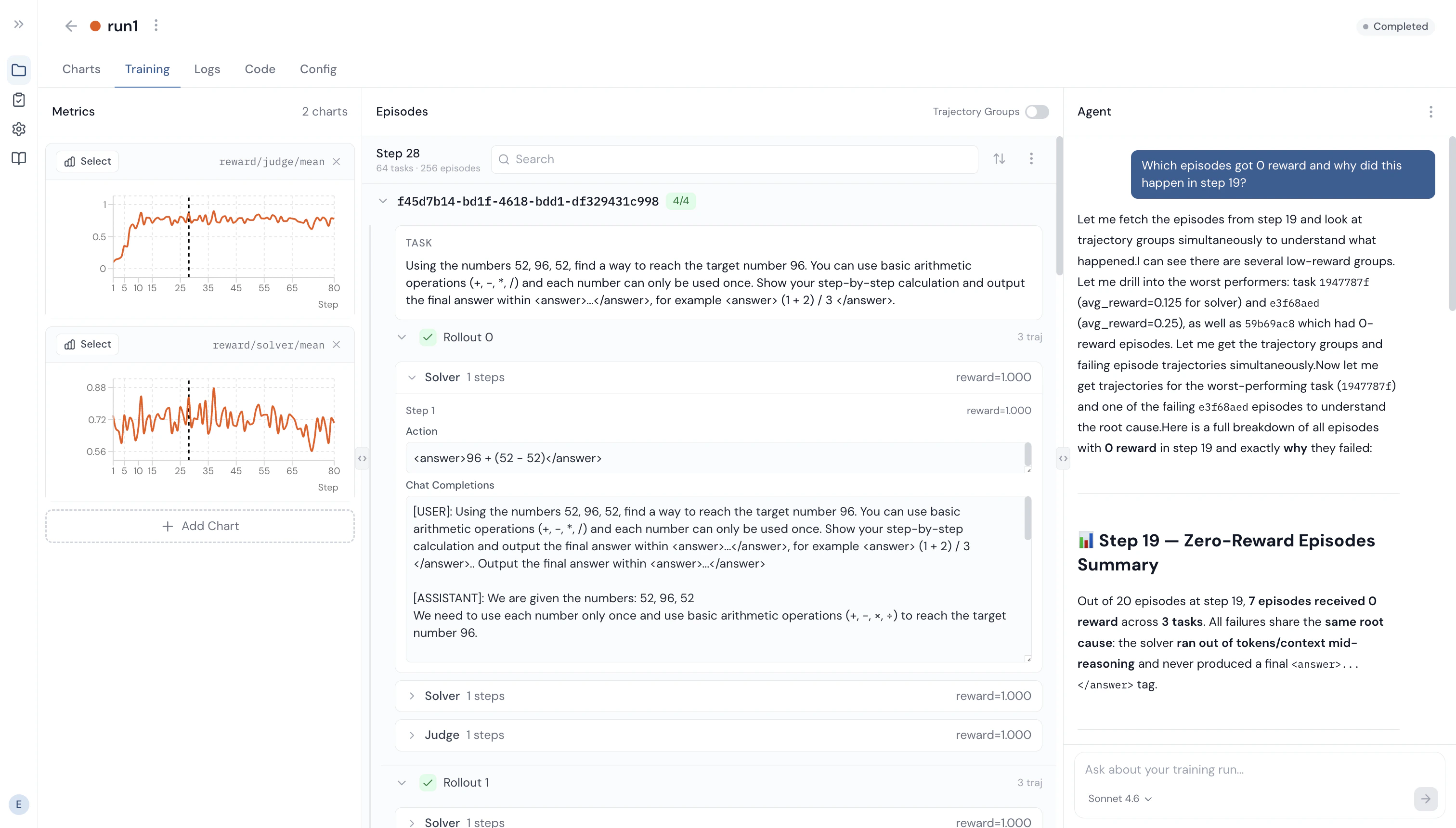

Database

rLLM UI stores sessions, metrics, episodes, trajectories, and logs in a database so they persist across restarts and are searchable.

- SQLite (default): No setup required. A local file (api/rllm_ui.db) is created on first run.
- PostgreSQL: Adds full-text search with stemming and relevance ranking. Set DATABASE_URL in api/.env to a PostgreSQL connection string.
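The backend choice described above can be sketched in a few lines of Python. This is an illustration only, not the server's actual code: the function name `describe_database`, the accepted URL schemes, and the placeholder credentials are all assumptions.

```python
from urllib.parse import urlsplit

SQLITE_PATH = "api/rllm_ui.db"  # default SQLite file named in the text above


def describe_database(env):
    """Return (backend, location) for a mapping of environment variables.

    Hypothetical helper: the real server may resolve its database differently.
    """
    url = env.get("DATABASE_URL", "").strip()
    if not url:
        # Unset or empty: fall back to the local SQLite file.
        return ("sqlite", SQLITE_PATH)
    scheme = urlsplit(url).scheme
    # Assumption: only PostgreSQL-style URLs are meaningful here.
    if scheme not in ("postgresql", "postgres"):
        raise ValueError(f"unsupported DATABASE_URL scheme: {scheme!r}")
    return ("postgresql", url)


# Placeholder connection string; substitute your own user, password, host and database.
example_env = {"DATABASE_URL": "postgresql://user:password@localhost:5432/rllm_ui"}
print(describe_database({}))
print(describe_database(example_env))
```

A check like this is handy in a setup script to catch a malformed connection string before the API starts.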

Observability AI agent

To enable the agent, set your Anthropic API key (ANTHROPIC_API_KEY) in api/.env.

Configuration

| Variable | Required | Scope | Default | Description |
|---|---|---|---|---|
| RLLM_UI_URL | No | Training script env | http://localhost:3000 | URL of your local rllm-ui server |
| DATABASE_URL | No | api/.env | SQLite | PostgreSQL connection string. Defaults to SQLite if unset. |
| ANTHROPIC_API_KEY | No | api/.env | (none) | Enables the built-in AI agent |
| VITE_API_URL | No | frontend/.env.development | http://localhost:3000 | Only needed if the API runs on a non-default port |
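Taken together, the cloud and self-hosted tables imply a simple rule for which server a training script talks to. The sketch below spells out one plausible reading of that rule; the function name `resolve_ui_url` and the exact precedence (explicit URL first, then API key, then localhost) are assumptions inferred from the documented defaults, not the logger's actual code.

```python
CLOUD_URL = "https://ui.rllm-project.com"  # cloud default from the cloud table
LOCAL_URL = "http://localhost:3000"        # self-hosted default from the table above


def resolve_ui_url(env):
    """Pick the UI server URL from a mapping of environment variables.

    Assumed precedence: explicit RLLM_UI_URL, then cloud if RLLM_API_KEY
    is set, otherwise the local server.
    """
    explicit = env.get("RLLM_UI_URL", "").strip()
    if explicit:
        return explicit.rstrip("/")
    if env.get("RLLM_API_KEY"):
        return CLOUD_URL
    return LOCAL_URL


print(resolve_ui_url({}))                            # local default
print(resolve_ui_url({"RLLM_API_KEY": "example"}))   # cloud default
```

In a real script you would pass `os.environ` instead of a literal dict.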

Connecting rLLM to UI

Training runs with script

Regardless of which service you use (cloud or self-hosted), add "ui" to your trainer’s logger list in your rLLM training script.
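As a rough illustration of that step, the snippet below appends "ui" to a logger list without duplicating it. The nested dict is a stand-in: the real rLLM trainer config object, its key names, and the helper `with_ui_logger` are assumptions made for this sketch.

```python
def with_ui_logger(loggers):
    """Return a copy of the logger list with "ui" included exactly once."""
    loggers = list(loggers)
    if "ui" not in loggers:
        loggers.append("ui")
    return loggers


# Hypothetical trainer config; the actual rLLM config layout may differ.
config = {"trainer": {"logger": ["console"]}}
config["trainer"]["logger"] = with_ui_logger(config["trainer"]["logger"])
print(config["trainer"]["logger"])
```

Keeping the existing loggers in place means console output and any other tracking backends continue to work alongside the UI.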

Training / Evaluation runs with rLLM CLI

If you use our cloud service with the rLLM CLI, you can launch training and evaluation runs directly from the CLI.

How it works

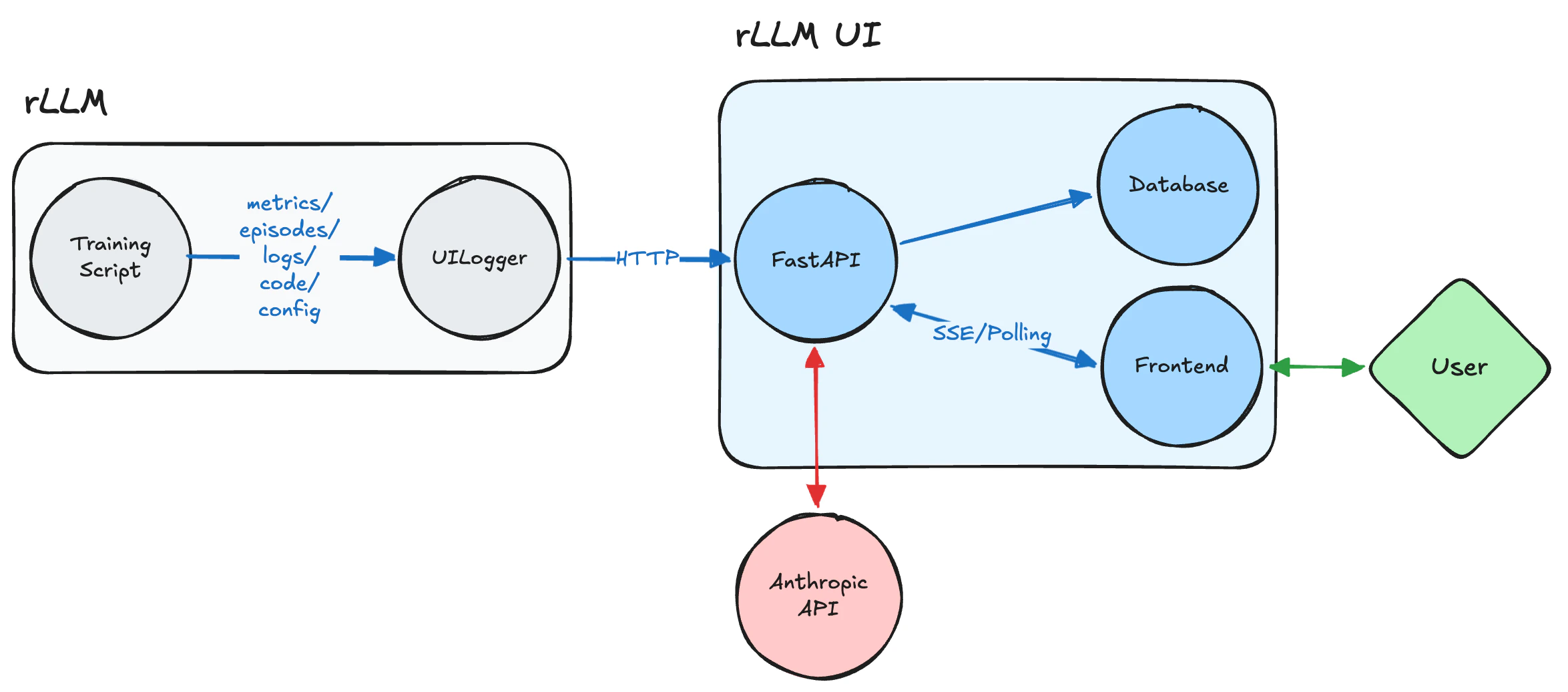

rLLM connects to the UI via the UILogger backend, registered as "ui" in the Tracking class (rllm/utils/tracking.py).

On init, the logger:

- Creates a training session via POST /api/sessions
- Starts a background heartbeat thread (for crash detection)
- Wraps stdout/stderr with TeeStream to capture training logs
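The three init steps can be sketched with the standard library alone. This is a simplified illustration, not the UILogger source: the request payload, the heartbeat interval, and the internals of this `TeeStream` are assumptions, and the request is built but never sent.

```python
import contextlib
import json
import sys
import threading
import time
import urllib.request


def build_session_request(base_url, payload):
    """Build (but do not send) a POST /api/sessions request."""
    return urllib.request.Request(
        base_url.rstrip("/") + "/api/sessions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def start_heartbeat(beat, interval_s, stop_event):
    """Call beat() every interval_s seconds until stop_event is set."""
    def loop():
        # Event.wait returns False on timeout, True once the event is set.
        while not stop_event.wait(interval_s):
            beat()

    thread = threading.Thread(target=loop, daemon=True)
    thread.start()
    return thread


class TeeStream:
    """Forward writes to the original stream and keep a copy in a list."""

    def __init__(self, original, sink):
        self.original = original
        self.sink = sink

    def write(self, text):
        self.original.write(text)
        self.sink.append(text)
        return len(text)

    def flush(self):
        self.original.flush()


# Demo with a hypothetical payload; nothing is sent over the network.
request = build_session_request("http://localhost:3000", {"name": "demo-run"})
beats, captured = [], []
stop = threading.Event()
thread = start_heartbeat(lambda: beats.append(time.time()), 0.01, stop)
with contextlib.redirect_stdout(TeeStream(sys.stdout, captured)):
    print("step 1 loss=0.5")
time.sleep(0.1)
stop.set()
thread.join()
```

If the training process dies, the heartbeat stops arriving, which is what lets a server tell a crashed run apart from one that is merely slow.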